
The 2026 International Solid-State Circuits Conference (ISSCC 2026) was recently held in San Francisco, USA. ISSCC is widely recognized by both academia and industry as the premier conference in integrated circuit design and is often referred to as the "Olympics of Chip Design." Established in 1953, it has historically served as the launchpad for the world's most advanced IC technologies and attracts over 3,000 participants from global industry and academia each year. Accepted papers represent state-of-the-art research in chip design.

The research group led by Professor Longyang Lin at the School of Microelectronics, Southern University of Science and Technology (SUSTech), has made significant progress in intelligent vision chips, with two recent papers accepted for presentation at ISSCC 2026. The first presents an in-memory computing vision SoC for edge intelligent vision applications, titled *“A 55nm Intelligent Vision SoC Achieving 346TOPS/W System Efficiency via Fully Analog Sensing-to-Inference Pipeline,”* developed collaboratively by SUSTech, ILIKEYS, and RERAM. The second presents a self-healing image sensor chip for space applications, titled *“A Radiation-Hardened Self-Healing CMOS Imager with Online Pixel/Logic Annealing and Tile-Adaptive Compression for Space Applications.”* Assistant Professor Longyang Lin is the corresponding author of both papers, and the School of Microelectronics at SUSTech is the primary affiliated institution. The two works address, respectively, the energy efficiency bottleneck of analog-to-digital conversion in edge vision systems and the radiation damage and communication bandwidth bottlenecks in space missions.

These research efforts were supported by the National Natural Science Foundation of China (NSFC), Guangdong Province Projects, the SUSTech-ILIKEYS Joint Laboratory, and the SUSTech-Cunhou Technology Joint Laboratory.

Paper 1: Fully Analog End-to-End Intelligent Vision Chip Breaks the ADC Energy Efficiency Bottleneck

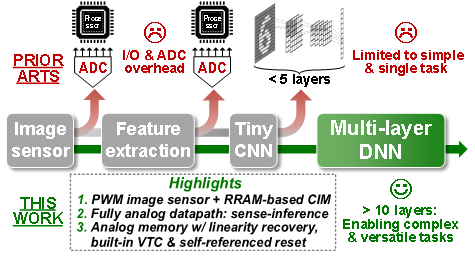

With the advancement of artificial intelligence, there is a growing demand for edge AI vision systems capable of handling complex tasks such as feature extraction and object detection. Traditional intelligent vision chips often employ a stacked architecture of sensors and digital processors. While this alleviates data transfer pressure to some extent, it still heavily relies on power-hungry analog-to-digital converters (ADCs). To overcome the power bottleneck, academia has proposed in-sensor or near-sensor computing techniques. However, existing solutions often handle only specific tasks and are limited by storage capacity and fixed hardware, making it difficult to support complex convolutional neural networks (CNNs). More critically, when processing multi-layer neural networks, frequent analog-to-digital and digital-to-analog signal conversions between computing nodes introduce significant latency and energy overhead, becoming a core technical bottleneck limiting the path towards high compute power and energy efficiency for edge vision chips.

Figure 1: Comparison of intelligent vision processing paradigms and highlights of this work

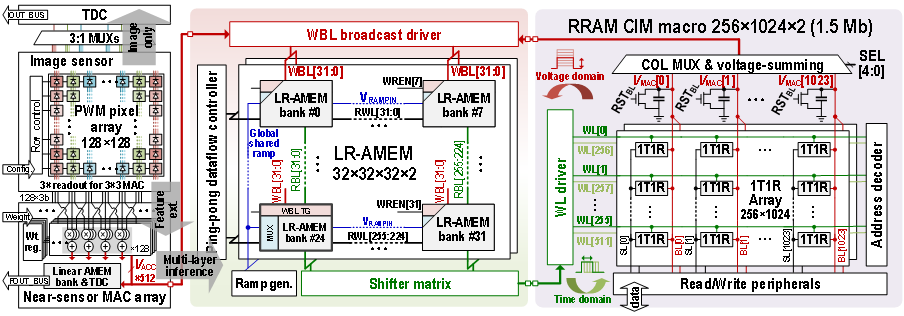

To address this, the work proposes a multifunctional intelligent vision SoC based on a "fully analog" computing paradigm (Figure 1, Figure 2). For end-to-end vision tasks, it completely eliminates analog-to-digital conversion between the sensor and the computing units, as well as between the layers of the neural network. The chip integrates a Pulse-Width Modulation (PWM) image sensor with an RRAM-based in-memory computing architecture and introduces a core module: an analog memory circuit with a built-in voltage-to-time converter and linear recovery capability (LR-AMEM). When running multi-layer networks, the time-domain pulses output by the sensor directly drive the in-memory computing array. The computed voltages are stored in the analog memory cells; the converter built into the memory then restores the voltages to time-domain pulses, which directly drive the computation of the next layer. This "time-voltage-time" purely analog signal flow not only avoids power-hungry ADCs and quantization noise but also leverages underlying circuit characteristics to cancel the inherent nonlinearity of charge-domain RRAM analog in-memory computing. It achieves strong robustness against process, voltage, and temperature variations without requiring additional calibration circuits.

Figure 2: Architecture diagram of the fully analog-domain intelligent vision SoC chip
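The "time-voltage-time" signal flow described above can be illustrated with a small behavioral model. This is only a sketch under idealized assumptions, not the chip's circuit behavior: the constants `T_MAX` and `V_MAX`, the signed weight matrices, the per-row normalization, and the clip-at-zero activation are all hypothetical choices made for illustration, and the nonlinearity cancellation discussed in the text is not modeled.

```python
import numpy as np

# Hypothetical full-scale values in arbitrary units (not from the paper).
T_MAX = 1.0  # longest pulse width the sensor or memory can emit
V_MAX = 1.0  # largest voltage an analog memory cell can hold


def pixels_to_pulses(pixels):
    """PWM sensor model: intensity in [0, 1] maps linearly to a pulse width."""
    return np.clip(np.asarray(pixels, dtype=float), 0.0, 1.0) * T_MAX


def cim_layer(pulse_widths, weights):
    """Idealized in-memory MAC: each output accumulates weight * pulse width.

    The sum is normalized per output row so it fits the storage range,
    then clipped at zero as a stand-in for a ReLU-like activation.
    """
    weights = np.asarray(weights, dtype=float)
    acc = weights @ pulse_widths
    # Largest magnitude each row could reach; guard against all-zero rows.
    scale = np.maximum(np.abs(weights).sum(axis=1) * T_MAX, 1e-12)
    return np.clip(V_MAX * acc / scale, 0.0, V_MAX)


def voltage_to_pulses(volts):
    """Analog memory readout: stored voltage converted linearly to a pulse width."""
    return volts / V_MAX * T_MAX


def run_network(pixels, layers):
    """Chain layers with no digital conversion between them (layers must be non-empty)."""
    pulses = pixels_to_pulses(pixels)
    for weights in layers:
        volts = cim_layer(pulses, weights)  # time -> voltage
        pulses = voltage_to_pulses(volts)   # voltage -> time for the next layer
    return volts


if __name__ == "__main__":
    layers = [np.array([[1.0, 1.0], [1.0, -1.0]]), np.array([[1.0, 0.0]])]
    print(run_network([0.5, 1.0], layers))
```

The point of the sketch is structural: every intermediate value is either a pulse width or a stored voltage, so no quantization step appears anywhere in the loop.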

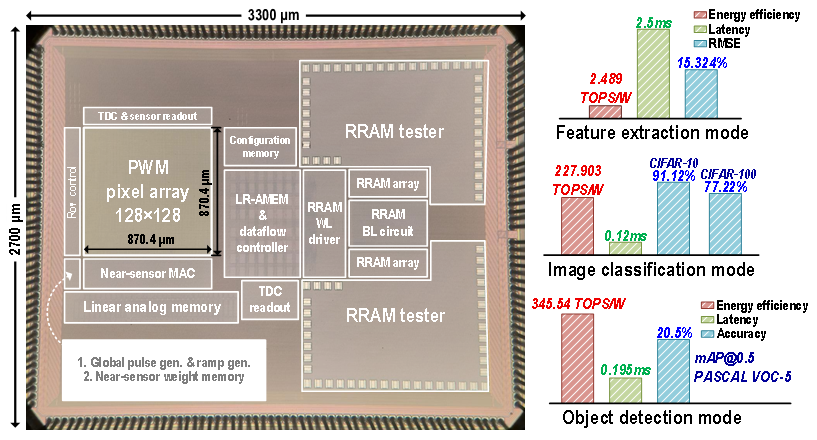

This solution was validated with a chip fabricated in 55nm CMOS technology (Figure 3). Measurements show a sensing energy of 11 pJ/(pixel·frame), a peak multiply-accumulate energy efficiency of 8,791 TOPS/W, and a system-level end-to-end energy efficiency of 346 TOPS/W. The chip performs a range of edge vision tasks efficiently and with low latency, including feature extraction, image classification, and object detection. Compared to existing intelligent image sensors, this fully analog vision chip improves system-level energy efficiency by a factor of 75.6 to 966. The work demonstrates the feasibility and potential of fully analog signal chains for complex vision computing at the edge, and offers a competitive technical solution for future ultra-low-power, fully integrated edge intelligent vision systems.

Figure 3: Chip micrograph and performance on three vision tasks
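To put the reported efficiency figures in more concrete terms, the short calculation below converts TOPS/W into energy per operation and back-computes the baseline efficiencies implied by the 75.6x to 966x improvement. It assumes the improvement factors are plain ratios of system-level TOPS/W, which the article does not state explicitly. The 128x128 resolution in the usage line is purely hypothetical, since the sensor resolution is not given here.

```python
SYSTEM_TOPS_W = 346.0              # system-level efficiency reported above
PEAK_MAC_TOPS_W = 8791.0           # peak MAC efficiency reported above
SENSING_PJ_PER_PIXEL_FRAME = 11.0  # sensing energy reported above


def energy_per_op_fj(tops_per_watt):
    """Convert TOPS/W to femtojoules per operation (1 TOPS/W = 1e12 op/J)."""
    return 1e15 / (tops_per_watt * 1e12)


def implied_baseline_tops_w(improvement_factor):
    """Baseline efficiency implied if the factor is a simple TOPS/W ratio (assumption)."""
    return SYSTEM_TOPS_W / improvement_factor


def sensing_energy_uj(width, height):
    """Sensing energy per frame in microjoules for a given pixel count."""
    return width * height * SENSING_PJ_PER_PIXEL_FRAME * 1e-6


if __name__ == "__main__":
    print(f"system: {energy_per_op_fj(SYSTEM_TOPS_W):.2f} fJ/op")      # about 2.89
    print(f"peak MAC: {energy_per_op_fj(PEAK_MAC_TOPS_W):.3f} fJ/op")  # about 0.114
    for factor in (75.6, 966.0):
        print(f"{factor}x -> {implied_baseline_tops_w(factor):.2f} TOPS/W baseline")
    # Hypothetical 128x128 array, used only to show the unit conversion.
    print(f"sensing: {sensing_energy_uj(128, 128):.3f} uJ/frame")
```

Under these assumptions, the system-level figure corresponds to roughly 2.89 fJ per operation, and the comparison range corresponds to prior designs at roughly 0.36 to 4.6 TOPS/W.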

Paper 2: Self-Healing CMOS Image Sensor for Space Applications Breaks the Dual Bottlenecks of Radiation Damage and Bandwidth

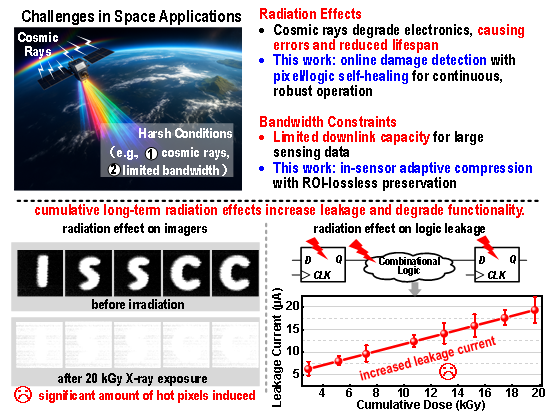

Space exploration and Earth observation missions rely on highly reliable CMOS image sensors. However, in the harsh radiation environment of space, the pixel array and logic circuits of the chip are highly susceptible to hardware damage from cumulative radiation effects, such as "hot pixels," increased leakage current, and timing errors, ultimately leading to severe degradation of image quality. Traditional radiation hardening techniques (e.g., special device layouts or hardware redundancy) often only provide passive protection, incurring significant area overhead and failing to actively "heal" physical damage that has already occurred. Although previous research has introduced thermal annealing techniques to repair damaged devices, these schemes typically require interrupting image capture for repair and cannot cover damage to logic circuits. Simultaneously, the massive amount of image data generated by spacecraft in orbit far exceeds the downlink bandwidth limits of satellites. Therefore, developing an image sensor that can "self-heal while working" under intense radiation and intelligently compress data has become a major challenge in the field of space vision detection.

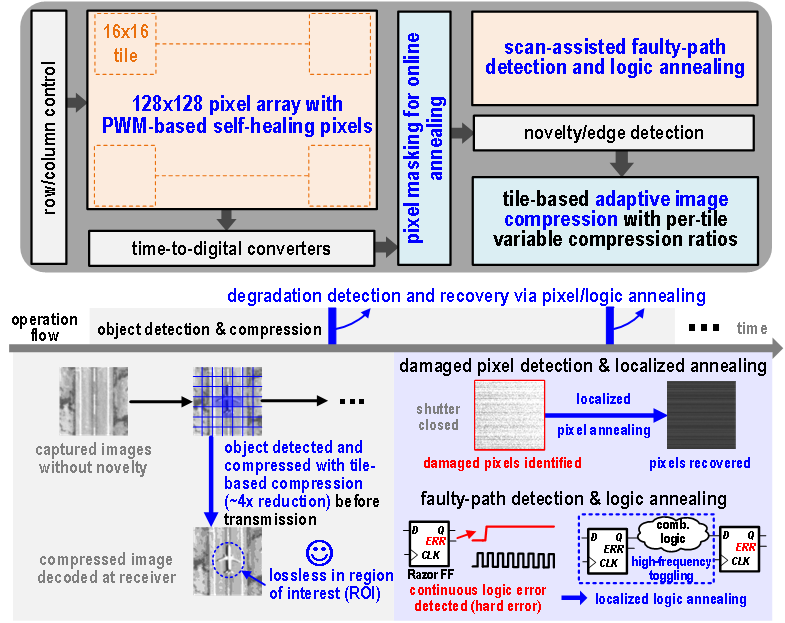

Figure 4: Challenges of space applications and contributions of this work

To address the above challenges, this research proposes a radiation-hardened self-healing CMOS image sensor (Figure 4, Figure 5). The innovation of this chip lies in the integration of "local online thermal annealing" repair techniques for both pixel circuits and digital logic circuits, along with a tile-based adaptive compression engine. On the pixel side, the chip employs a special 6T PWM pixel circuit design. When the system detects abnormal pixels damaged by radiation, it applies a forward bias to induce localized high-temperature annealing, thereby mitigating radiation damage caused by lattice displacement defects and trapped charges. Notably, the chip does not need to stop during repair; instead, it uses a "pixel masking" technique to intelligently interpolate and compensate using data from neighboring pixels, enabling continuous imaging during online repair. On the logic side, the system uses enhanced razor flip-flops to precisely capture timing error paths caused by cumulative radiation-induced timing violations. It then generates localized Joule heat through a high-frequency clock to precisely anneal the damaged logic circuits, with minimal impact on the frame rate. Concurrently, the chip's built-in adaptive image compression engine assesses scene complexity in real-time, losslessly preserving key targets or complex edges (regions of interest) while applying aggressive compression to flat background regions.

Figure 5: Architecture and workflow of the radiation-hardened self-healing CMOS image sensor
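The "pixel masking" step described above can be sketched in software as follows. The article does not specify the interpolation rule, so this example assumes a simple mean over the valid pixels in the 8-neighborhood; the function name `mask_and_interpolate` and the boolean `under_repair` map are hypothetical.

```python
import numpy as np


def mask_and_interpolate(frame, under_repair):
    """Fill pixels flagged as under repair with the mean of their valid neighbors.

    frame:        2-D array of pixel values.
    under_repair: 2-D boolean array, True where a pixel is being annealed.
    Pixels with no valid neighbor keep their original value.
    """
    frame = np.asarray(frame, dtype=float)
    mask = np.asarray(under_repair, dtype=bool)
    out = frame.copy()
    height, width = frame.shape
    for r, c in zip(*np.nonzero(mask)):
        # 3x3 window around the pixel, clipped at the array borders.
        r0, r1 = max(r - 1, 0), min(r + 2, height)
        c0, c1 = max(c - 1, 0), min(c + 2, width)
        patch = frame[r0:r1, c0:c1]
        valid = ~mask[r0:r1, c0:c1]  # excludes the pixel itself and masked neighbors
        if valid.any():
            out[r, c] = patch[valid].mean()
    return out


if __name__ == "__main__":
    frame = np.full((3, 3), 10.0)
    frame[1, 1] = 200.0  # simulated hot pixel
    mask = np.zeros((3, 3), dtype=bool)
    mask[1, 1] = True
    print(mask_and_interpolate(frame, mask))
```

Because masked neighbors are excluded from the average, a cluster of pixels under repair does not contaminate the interpolated values with damaged readings.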

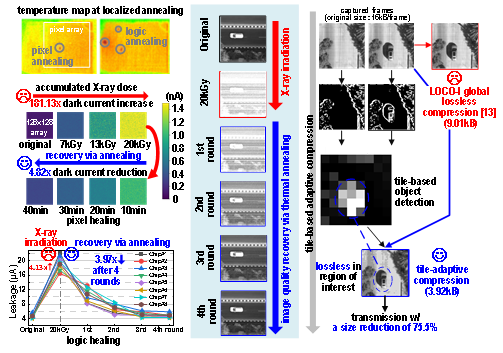

Experimental results in extreme environments (Figure 6) show that after exposure to X-ray radiation of up to 20 kGy, the chip's dark current increased by a factor of nearly 181 relative to its initial value, severely degrading the image. After several rounds of online annealing, the leakage currents of the pixel and logic circuits decreased by factors of 4.82 and 3.97, respectively, relative to the damaged state at 20 kGy, and the image quality was restored to near its original clarity. Furthermore, the adaptive compression reduces the image data volume by approximately 75% while preserving key details. This work addresses the dual technical bottlenecks of space radiation damage and limited communication bandwidth, providing a vision chip solution for future space exploration missions that demand long endurance and high reliability.

Figure 6: X-ray radiation healing and adaptive image compression test results
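The idea behind tile-adaptive compression can be illustrated with a minimal sketch. The chip's actual complexity metric and coding scheme are not described in this article, so the example assumes per-tile variance as the complexity measure, keeps complex tiles unchanged, and replaces flat tiles with their mean. The tile size, the threshold, and the function name are hypothetical, and per-tile mode flags are ignored when counting stored values.

```python
import numpy as np


def tile_adaptive_compress(image, tile=8, var_threshold=25.0):
    """Keep high-variance tiles losslessly; store flat tiles as a single mean value.

    Returns the reconstructed image and the fractional reduction in stored values.
    """
    image = np.asarray(image, dtype=float)
    height, width = image.shape
    if height % tile or width % tile:
        raise ValueError("image dimensions must be multiples of the tile size")
    recon = np.empty_like(image)
    stored_values = 0
    for r in range(0, height, tile):
        for c in range(0, width, tile):
            block = image[r:r + tile, c:c + tile]
            if block.var() > var_threshold:
                recon[r:r + tile, c:c + tile] = block  # complex region: lossless
                stored_values += block.size
            else:
                recon[r:r + tile, c:c + tile] = block.mean()  # flat region: one value
                stored_values += 1
    reduction = 1.0 - stored_values / image.size
    return recon, reduction


if __name__ == "__main__":
    img = np.zeros((16, 16))
    img[:8, :8] = 100.0 * (np.indices((8, 8)).sum(axis=0) % 2)  # one textured tile
    recon, reduction = tile_adaptive_compress(img)
    print(f"data volume reduced by {reduction:.1%}")
```

For reference, a reduction of approximately 75% in data volume, as reported above, corresponds to a compression ratio of about 4:1.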

Related Papers

Z. Yang, H. Yu, X. Liu, Z. Kong, H. Li, L. Ran, Z. Lyu, X. Feng, L. Zhao, Y. Li, J. Li, F. Zhou and L. Lin, "A 55nm Intelligent Vision SoC Achieving 346TOPS/W System Efficiency via Fully Analog Sensing-to-Inference Pipeline," *2026 IEEE International Solid-State Circuits Conference (ISSCC)*, San Francisco, CA, USA, 2026, pp. 126-128, doi: 10.1109/ISSCC49663.2026.11409209.

Link: https://ieeexplore.ieee.org/abstract/document/11409209

Q. Cheng, Z. Yang, H. Li, Q. Li, Z. Kong, G. Niu, Y. Liang, J. Li, J. Yoo, M. Hashimoto and L. Lin, "A Radiation-Hardened Self-Healing CMOS Imager with Online Pixel/Logic Annealing and Tile-Adaptive Compression for Space Applications," *2026 IEEE International Solid-State Circuits Conference (ISSCC)*, San Francisco, CA, USA, 2026, pp. 390-392, doi: 10.1109/ISSCC49663.2026.11408995.

Link: https://ieeexplore.ieee.org/abstract/document/11408995